The cost of electricity has become daily news fodder and looks like it will be for some time to come.

In this article I want to focus on the costs of generating electricity from different sources and try to unpick a few fallacies and misuses of measures commonly used to represent these costs.

Supply = Demand

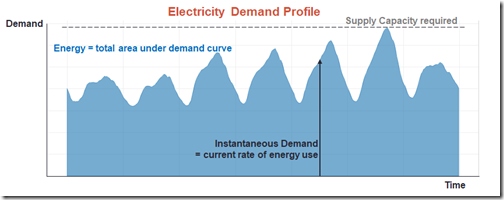

The first thing to remember is that unlike many goods and most physical commodities, electrical energy is not produced in the form of “widgets” which can be separately produced, delivered, and used at different times and different rates. Essentially in any large electricity system all three actions – production, delivery, and usage – happen instantaneously and at the same rate (in aggregate). If they don’t – the lights go out.

Even where a system has the capability to store energy in the form of pumped hydro or batteries, to pick a couple of topical technologies, electrical energy first gets converted to another form – gravitational potential by pumping water uphill, or chemical energy by recharging a battery – and then reconverted to electrical energy when it’s used. So as far as electricity itself is concerned, production, delivery and usage still happen all at once.

Because its production and usage are inextricably tied together, we can’t just make electricity in the most “cost-efficient” way at times that best suit production – whether that’s from “baseload” power stations running constantly 24/7 (which of course they don’t – there’s maintenance, and also “unplanned outages” – i.e. breakdowns), or from windfarms and solar panels as and when wind and sunshine allow – and then use what’s previously been made when we need to. The rate at which we make it must exactly match the rate at which we use it, second to second.

And as everyone knows we don’t use electricity anything like uniformly:

This has very important implications for working out the real costs of electricity production.

LCOE and its Assumptions

Unfortunately the most common metric used to compare costs of different forms of generation – and this applies in industry literature, not just the popular press – completely skips over this fundamental truth.

This measure goes by various names but often it’s called the Long-run (or “Levellised”) Cost Of Electricity – “LCOE”. In principle, it’s a simple yardstick that attempts to combine the various types and levels of cost involved in generating power into a single dollar figure, and divide this figure by a quantity of electricity generated to produce a per-unit cost of electrical energy, usually expressed in units of dollars per megawatt hour ($/MWh).

As the “Long-run” part of the name suggests, a feature of this metric is an attempt to attribute or spread the large up-front investment, or capital costs of power generation technologies to amounts of energy produced, across long plant lifetimes – decades in most cases.

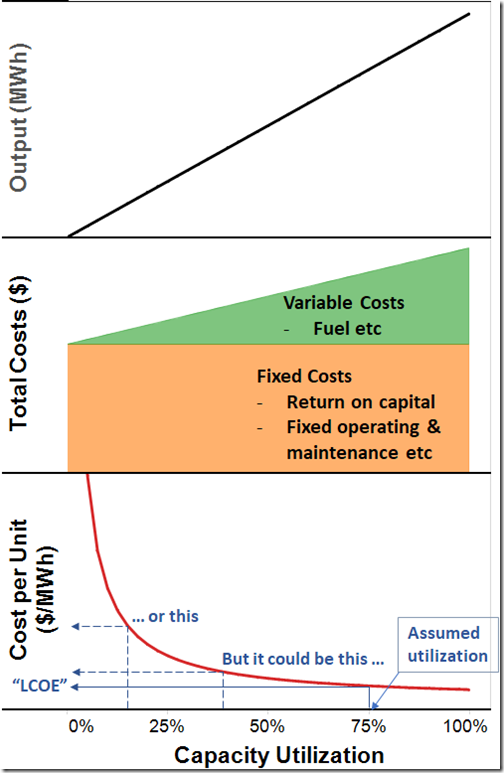

In doing this, as well as capturing the different types of cost involved, the calculations behind the measure rely on some critical assumptions about the rate of return on investment (or discount rate in financial engineering terms), and capacity utilization, i.e. energy production relative to maximum capacity, for different technologies.

What LCOE measures usually do is express all costs of building, owning and running a power station of a given megawatt capacity in dollars per year (using the rate of return to convert upfront capital costs to an annual equivalent), and divide this by output in megawatt hours per year – which comes from an assumption about capacity utilization.

Because a large proportion of the total costs of building, owning and operating any form of generation are fixed, the per-unit cost of production varies strongly and inversely with this assumed capacity utilization, as illustrated on the chart below:
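As a rough numerical companion to that relationship, here is a minimal sketch of the calculation described above: upfront capital is converted to an annual equivalent using a capital recovery factor, fixed costs are added, and the total is divided by annual output implied by an assumed capacity utilization. The function names and every cost figure are hypothetical and chosen only for illustration; real LCOE studies use more detailed cost categories.

```python
HOURS_PER_YEAR = 8760


def capital_recovery_factor(rate: float, years: int) -> float:
    """Fraction of upfront capital that must be recovered each year
    to earn the given rate of return over the plant lifetime."""
    if rate == 0:
        return 1.0 / years
    growth = (1.0 + rate) ** years
    return rate * growth / (growth - 1.0)


def lcoe(capex_per_mw: float, fixed_om_per_mw_year: float,
         variable_cost_per_mwh: float, rate: float, years: int,
         capacity_factor: float) -> float:
    """Illustrative LCOE in $/MWh for one MW of capacity."""
    annual_fixed = (capex_per_mw * capital_recovery_factor(rate, years)
                    + fixed_om_per_mw_year)
    annual_mwh = HOURS_PER_YEAR * capacity_factor  # output per MW per year
    return annual_fixed / annual_mwh + variable_cost_per_mwh


# Hypothetical plant: $2m/MW capital cost, $40k/MW/year fixed operating cost,
# $20/MWh variable cost, 8% rate of return, 30-year life.
for cf in (0.9, 0.6, 0.3, 0.1):
    cost = lcoe(2_000_000, 40_000, 20.0, 0.08, 30, cf)
    print(f"capacity utilization {cf:.0%}: {cost:.0f} $/MWh")
```

The only input that changes between the printed rows is the assumed capacity utilization, which is the point: the fixed component of the per-unit cost doubles every time utilization halves.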

And this is the root of a key problem with LCOE in the way we often see it employed. Because electricity has to be made as it is used, we simply can’t assume some particular fixed capacity utilization factor for a specific technology or power station to get an output figure, divide that into costs for that same time period, and arrive at a number that can be blithely thrown about as a meaningful comparator between technologies, or that yields much insight into that technology’s impact on the overall cost of production in a real-world electricity system.

System Realities

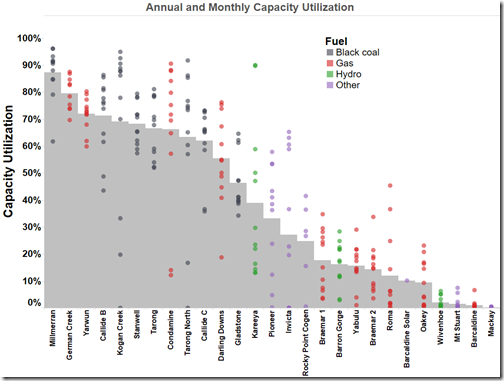

The need to generate electricity only as it is used, and the widely varying rate of that usage, mean that regardless of technology, the capacity utilization of generators fluctuates enormously across the fleet at any one time (so at any point in time some are running at maximum capacity and others not at all), and utilization for any given generator also varies across time.

Any real-world fleet will comprise some generators which usually run at high utilization and others which run at relatively low and quite variable utilization rates. The chart below shows the range of capacity utilization rates (monthly and annual) applying to the Queensland generation fleet in 2016. Simple cost of production measures like LCOE that ignore this reality by assuming a single fixed capacity utilization can therefore be highly misleading.

A related problem with LCOE measures in application to real world systems is that if we add a new power station to an existing system, this will reduce the capacity factors of some or all of the other power stations in the fleet (because total production must still equal demand) and therefore increase their unit costs of production.
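A toy calculation makes this effect concrete. The sketch below assumes a fixed annual demand met entirely by an existing fleet, then lets a new power station take a share of that energy. All quantities and costs are hypothetical, and the existing fleet is treated as a single block with one fixed and one variable cost, which is a deliberate simplification.

```python
HOURS_PER_YEAR = 8760


def capacity_factor(energy_mwh: float, capacity_mw: float) -> float:
    """Annual energy as a fraction of what the plant could produce flat out."""
    return energy_mwh / (capacity_mw * HOURS_PER_YEAR)


def unit_cost(fixed_cost_per_mw_year: float, variable_cost_per_mwh: float,
              cf: float) -> float:
    """Average cost in $/MWh: fixed costs spread over output, plus variable cost."""
    return fixed_cost_per_mw_year / (HOURS_PER_YEAR * cf) + variable_cost_per_mwh


# All figures below are hypothetical.
demand_mwh = 5_000_000        # annual demand, unchanged by the new entrant
existing_mw = 1_000           # capacity of the existing fleet
new_entrant_mwh = 1_000_000   # energy now supplied by the new power station

cf_before = capacity_factor(demand_mwh, existing_mw)
cf_after = capacity_factor(demand_mwh - new_entrant_mwh, existing_mw)

cost_before = unit_cost(200_000, 30.0, cf_before)
cost_after = unit_cost(200_000, 30.0, cf_after)

print(f"existing fleet capacity factor: {cf_before:.1%} -> {cf_after:.1%}")
print(f"existing fleet unit cost: {cost_before:.2f} -> {cost_after:.2f} $/MWh")
```

Nothing about the existing fleet's own costs has changed in this sketch; its unit cost rises purely because the same fixed costs are spread over fewer megawatt hours.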

So drawing conclusions about impacts on system average costs on the basis of LCOE measures is fraught.

And impacts on market prices will be different again because market prices tend to reflect short run variable costs and overall supply demand balance rather than total costs.

Dispatchability

A third justified criticism of LCOE focuses on the issue of “dispatchability”. This criticism points out that because of the need to continuously balance supply and demand there is a difference in value to an electricity system of generation sources whose output can be called up and controlled (“dispatched”) anywhere between some minimum value (ideally zero) and maximum capacity, versus those whose output is largely determined by non-controllable factors like wind and sunshine. Simple LCOE measures totally ignore this complication.

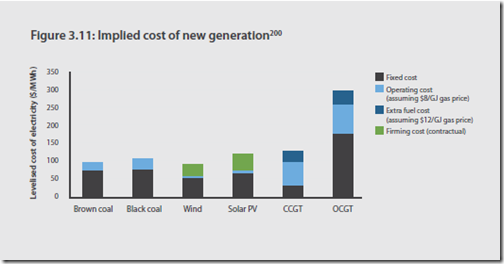

There are sometimes extensions or modifications of the LCOE concept proposed to factor in “firming costs” for non-dispatchable technologies. This might involve adding in costs for a storage capability attached to a non-dispatchable source, or extra costs for a certain “matched” quantity of dispatchable generating capacity to supplement variable output from the non-dispatchable source. Finkel had a go at this:

But these approaches suffer from the same fundamental problem as LCOE – fixed and somewhat arbitrary assumptions (and more of them) about amounts of and utilization rates for the supplementing technologies, and for the overall utilization of the combination in a real-world system. These attempts may be directionally useful in at least highlighting that costs for dispatchable and non-dispatchable sources are not directly comparable, but they are necessarily imprecise (despite charts like the one above) and really do little to resolve which technology is “cheaper” – something LCOE is not much good for even when comparing one form of conventional dispatchable technology with another.

Here it’s worth reiterating that assumptions that dispatchable “baseload” generation technology like coal or combined-cycle gas plant can simply run at a preferred high capacity factor at all times and never break down – implicit in LCOE measures and absence of any “firming costs” for these technologies – are also inappropriate. In an all-coal or all-gas or all-anything system, relatively few generators would be able to run this way.

Why Bother with LCOE?

So what is LCOE good for? Predominantly for assessing trends in generation costs for a given technology type over time – and only for comparison between technologies where their cost structures and technical characteristics happen to be very similar, meaning that they will play similar roles in a real world electricity system. So pointing out a falling trend over time in the LCOE of solar PV technologies is a very valid use of the measure. Comparing the LCOEs of (say) sub-critical and ultrasupercritical coal, or even the LCOEs of coal with and without carbon capture and storage, is also generally valid (although there are even traps in this once we explicitly factor in a price on carbon emissions).

LCOE can also be a useful metric if you’re a project developer or asset owner fortunate enough to find a buyer for your power station’s output who’s willing to take on the risks of variable capacity utilization and market prices, and offer you a fixed price Power Purchase Agreement (PPA) for a relatively predictable quantity of production. In this case comparing the price struck in the PPA with the LCOE of your power station is a valid indicator of profitability.

But note that here it’s the buyer who takes on the risks that the value of that electricity to the system is markedly different from the PPA price or LCOE.

Alternatives

After all that bagging of that particular measure, what are the alternatives? This will have to wait for another article, but there certainly are better ways to evaluate the relative economic value of different generation technologies, as well as demand-side measures. And they all start with the recognition, unlike LCOE measures, that the costs of meeting electricity demand have to be evaluated on a system-wide basis, not by focussing simply on individual technologies or power stations.

About our Guest Author


Allan O’Neil has worked in Australia’s wholesale energy markets since their creation in the mid-1990s, in trading, risk management, forecasting and analytical roles with major NEM electricity and gas retail and generation companies. He is now an independent energy markets consultant, working with clients on projects across a spectrum of wholesale, retail, electricity and gas issues. You can view Allan’s LinkedIn profile here.

Allan will be regularly reviewing market events here on WattClarity. Allan has also begun providing an on-site educational service covering how spot prices are set in the NEM, and other important aspects of the physical electricity market – further details here.

Your chart of utilization has a buried story, because on the far left is the coal, and on the far right is the Wivenhoe PHES which is co-owned, and thus was elected to be less profitable by the coal-power-station owner. Who is in turn owned by the state.

The LCOE of the PHES element is pretty good: it’s amortized over the requirement for Wivenhoe to exist for flood mitigation. It’s only the incremental cost of the PH part; the S part was there anyway. So your chart shows how something which has a really low LCOE, the PHES, can be turned into a non-earner if the owner doesn’t want to use it.

Why doesn’t the owner want to use it? Because its only role would be to displace coal power. If it was either split off, or Chinese-walled, it would have run far more.

Thanks for the interesting comment, George

Some of our more learned readers might want to also add in their thoughts, but you raise the valid comment about portfolio effects in operational decisions.

That’s one reason I am intrigued by the possibilities of shifting Wivenhoe into the mooted “Clean Co” by the QLD Govt. This would potentially deliver both:

1) Market benefits, in terms of increased competition (which, as noted yesterday, has declined in QLD); and

2) A valuable opportunity for collective learning about a real world case study into the use of large-scale storage.

With particular respect to Wivenhoe, it’s important to remember that a true cost of production calculation would need to factor in both:

1) Cost of purchase of energy

Now this might have historically been done at “off-peak” times but, as noted last week, the pattern of pricing has shifted to make off-peak prices more expensive.

2) Round-trip efficiency

On top of this, my understanding is that the round-trip efficiency of the pumping-to-generation process is not as great as it might initially seem.

Hence (1) and (2) add up to a significant (and variable/hard-to-predict) short-run marginal cost of operations for Wivenhoe.
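As a rough illustration of how (1) and (2) combine, the sketch below divides the price paid for pumping energy by the round-trip efficiency to get a cost per megawatt hour generated. The function name, the prices and the efficiency values are all hypothetical placeholders, not Wivenhoe's actual figures.

```python
def pumped_hydro_srmc(pumping_price_per_mwh: float,
                      round_trip_efficiency: float,
                      variable_om_per_mwh: float = 0.0) -> float:
    """Illustrative short-run marginal cost in $ per MWh generated.

    Each MWh generated needs 1 / efficiency MWh of pumping energy,
    so the energy purchase cost is scaled up by that ratio.
    """
    if not 0.0 < round_trip_efficiency <= 1.0:
        raise ValueError("round_trip_efficiency must be in (0, 1]")
    return pumping_price_per_mwh / round_trip_efficiency + variable_om_per_mwh


# Hypothetical pumping prices ($/MWh) and round-trip efficiencies.
for price in (20.0, 40.0, 60.0):
    for efficiency in (0.8, 0.7):
        srmc = pumped_hydro_srmc(price, efficiency)
        print(f"pumping at ${price:.0f}/MWh, efficiency {efficiency:.0%}: "
              f"${srmc:.2f}/MWh generated")
```

Both inputs move the result, which is why a shift in off-peak prices feeds directly into the cost of generating from storage.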

Hope this helps

Paul

As you point out, with electricity, supply and demand are inextricably linked, so LCOE is only a useful measure if all are levellised. That means generators should only be permitted to supply levellised electrons, namely those they can reasonably guarantee (i.e. short of breakdown) 24/7 all year round.

LCOE as a measure was clearly only relevant to fossil fuel sources in the past but instantly became irrelevant with the enforcement of unreliables on the system. Now, while unreliables may be able to outbid fossil fuels for pumped hydro under an enforced levellised supply regime, they would still have to contend with the opportunity cost of wasting some water for alternative irrigation, etc. with the Wivenhoes and Tumuts, or invest in pumped seawater.

Truly levellised electricity with guaranteed despatchability would be agnostic as to their incorporation into any portfolio mix, unlike the situation we have now and LCOE would return to its rightful place in long term investment decision-making.

I’m afraid that’s not correct at all, so perhaps I didn’t make the point clearly enough in the article, or you are too focused on the “dispatchability” issue.

Different generation technologies with different balances of fixed and variable costs can yield changing LCOE relativities depending on assumed capacity utilization. The fact that LCOE is usually quoted for a single, assumed capacity factor for each technology makes it anything but “technology agnostic”.

Have you ever wondered why coal and CCGT are generally quoted as having much lower LCOE values than OCGT (or reciprocating engines), but in real electricity systems there is often plenty of the latter built despite this apparent cost disadvantage? It’s because OCGT economics are much more favourable for the low utilization roles which are inevitable because of the peakiness of demand and the need to cover other plant outages.

There seems to be a common misconception that OCGT plants are built primarily for technical reasons like fast start and ramping capabilities, but in large scale systems these are secondary to the economic driver which is lower capital cost – if something is only going to be used a few percent of the time you want the cheapest capital cost you can get and variable fuel costs are far less important.
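To put some illustrative numbers on that, the sketch below compares the annual cost per megawatt of a high-capital, low-fuel-cost plant with a low-capital, high-fuel-cost plant across capacity factors, and finds the utilization at which the two cost the same. The cost figures are hypothetical and only loosely shaped like coal and OCGT; they are not taken from any published LCOE study.

```python
HOURS_PER_YEAR = 8760


def annual_cost_per_mw(fixed_per_mw_year: float, variable_per_mwh: float,
                       cf: float) -> float:
    """Total $ per MW per year at a given capacity factor."""
    return fixed_per_mw_year + variable_per_mwh * HOURS_PER_YEAR * cf


def breakeven_capacity_factor(fixed_a: float, var_a: float,
                              fixed_b: float, var_b: float) -> float:
    """Capacity factor at which plants A and B cost the same per MW per year.

    Assumes A has the higher fixed cost and the lower variable cost.
    """
    return (fixed_a - fixed_b) / (HOURS_PER_YEAR * (var_b - var_a))


# Hypothetical (annualised fixed $/MW/year, variable $/MWh) pairs.
COAL = (300_000, 25.0)
OCGT = (80_000, 120.0)

breakeven = breakeven_capacity_factor(*COAL, *OCGT)
print(f"OCGT is cheaper below a capacity factor of about {breakeven:.0%}")

for cf in (0.05, 0.25, 0.50, 0.85):
    coal = annual_cost_per_mw(*COAL, cf) / (HOURS_PER_YEAR * cf)
    ocgt = annual_cost_per_mw(*OCGT, cf) / (HOURS_PER_YEAR * cf)
    print(f"capacity factor {cf:.0%}: coal {coal:.0f} $/MWh, OCGT {ocgt:.0f} $/MWh")
```

With these made-up inputs the ranking of the two technologies flips depending on the utilization assumed, so a single quoted LCOE for each cannot tell you which one is cheaper for a particular role in the system.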

LCOE should have a very limited role in long-term decision making, since it unrealistically assumes a fixed or guaranteed capacity utilization.

I understand where you’re coming from here but in judging demand utilisation investment decisions fossil fuel plant investors know they have the capability at their fingertips to respond to various demands anytime. Unreliables don’t but have the imprimatur to dump on the system with extremely low variable costs.

As consumers we don’t want to know that a Tesla battery plus Hornsdale wind might be able to supply 100MW of power for an hour or so but what can Hornsdale supply for a whole week if called upon, let alone 24/7 all year round? Without enforcing levellised supply criteria we are headed for a Green fallacy of composition whereby supply is at the mercy of the elements. My ancestors weren’t so stupid when bright sparks like Tesla and Edison came along to show them the light and the way.

The real issue that our market operators are yet to address is the need for firm capacity to back up renewables and a mechanism for charging for capacity.

A capacity market will do this and will not favour one technology over another.

People often point to the WA capacity market as a failure and yes it was as their capacity market mechanism could not work when most of the generators offering capacity were owned by one entity and their IMO had a completely flawed methodology for determining the cost of capacity.

You don’t have to back up renewables if you simply rule that all tenderers to the grid can only tender up to a maximum they can guarantee 24/7 all year round and leave them all to work it out. Obviously the unreliables would have to invest in storage of some kind to lift their average tender or else partner with thermal generators and pay them their just premium for that.

Yes I know it will need some phase in for existing unreliables to comply with that level playing field and to break it gently to all the mums and dads with their rooftop solar but somebody has to stand up and scream out loud about the elephant in the room. It’s been the greatest moronic Groupthink that got us here in the first place and if you want to get rid of thermal completely then we aint seen nothing yet with the cost of going all renewable with the storage necessary to make it reliable 24/7.

You just look at the graph and ask yourself what sort of battery or pumped hydro storage would they need to be able to tender 10% of installed capacity reliably 24/7 all year round, and think about the cost:

http://anero.id/energy/wind-energy/2017/june

I would have thought that anyone familiar with electricity costs would already know that only the full system cost is a valid measure. Why does the matter need to be raised? Obviously because there are many cost claims circulating that are simply fake. I hope the next article exposes them. And by the way it is not valid to ‘factor in’ externalities like the damage cost of carbon emissions by adding them to the LCOE. The ‘externality adder’ has different purposes, especially in helping decide what are the best investments to make in reducing damage.

“..it is not valid to ‘factor in’ externalities like the damage cost of carbon emissions by adding them to the LCOE.”

Well it can be that we choose to impose a CO2 tax as a truer social cost on the system, provided it bears equally on all tenderers’ CO2 inputs, but the level of tax for such a level playing field is one of considerable conjecture.

I’d argue that also bears implicitly on your other observation: “I would have thought that anyone familiar with electricity costs would already know that only the full system cost is a valid measure”. That’s why I’d argue you can’t possibly have a full system cost allocated fairly amongst all the tenderers without the level playing field of despatchability as well, and so far that’s not the case. Finkel recognised that with his recommendation about despatchability for any future unreliables, but I’d suggest he was politically silent about all the existing ones for the obvious reason. Too many red faces admitting they got it wrong.

Interesting, I like it but it is very involved.

Let’s assume a very simple system and look at the effect of the RET.

In the country of Utopia, electric power is produced 50% by wind power and 50% by black coal over a 12 month time period.

Black Coal produces electricity at $55 per megawatt hour, but due to the RET they have to pay the wind power producers $85 per megawatt hour for a clean energy certificate, so their cost is ($55 + $85) + 10% profit = $154 per megawatt hour.

Wind power gets the going rate at that time plus the $85 from the Black Coal Generator.

Thus the power end users (manufacturers, commercial users and households) are paying higher prices than they did previously.

Wind power producers are very happy because they get paid whenever the wind is blowing and then get paid again by the Black Coal Electricity Generators.

Not what I would call a ‘level playing field’

Please show me where I am wrong; it is “doing my head in” and my Electricity Bill is just going up and up and up and up.

Well you might assume the $55 cost for black coal includes a return on capital so make the 10% the GST if that helps.

“my understanding is that the round-trip efficiency of the pumping-to-generation process is not as great as it might initially seem”

I’d like to know more about that. Has it been the subject of a subsequent article?

Not a specific article, Mark – however there was discussion about several aspects of storage following the release of the Generator Report Card 2018 during the middle of last year:

https://wattclarity.com.au/articles/2019/09/some-observations-about-storage/

With the statistics being updated and extended with the Generator Statistical Digest 2019 we have the opportunity to delve further, and AEMO’s Quarterly Insights reports are another good reference.