As has been widely reported (see here for additional details), an equipment failure and subsequent spot fires in the Torrens Island B switchyard caused the disconnection of three of Torrens Island Power Station’s four ‘B’ units, each of 200 MW capacity, last Friday 3 March. Prior to the incident the three tripped units were producing just over 400 MW, around 17% of South Australia’s total electricity demand. In addition, the same incident somehow seems to have caused the near-simultaneous tripping of the nearby Pelican Point Power Station, which was producing about 220 MW.

Referring back to AEMO’s investigation of the SA Black System incident on 28 September last year, the proximate cause identified for the system collapse on that day was the loss of 456 MW of windfarm generation following a series of faults and voltage dips caused by severe storm damage to the transmission system.

This raises the obvious question of why the system was able to withstand the loss of significantly more generation on Friday last week, apparently without any widespread load shedding, than the loss which triggered a complete system shutdown last September.

Even Friday’s near-miss has already led to fairly predictable but unhelpful finger-pointing and political posturing. A quick search on Twitter will find you some.

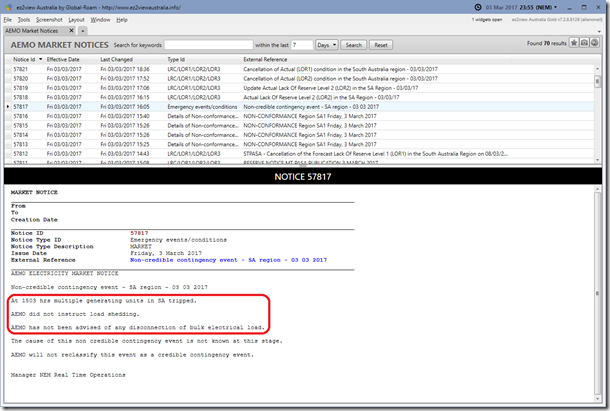

In this post I’ll look a bit more closely at the sequence of events that is discernible from AEMO’s actual market data, remembering that the full investigation into causes and the forensic detail of what happened is ongoing.

Immediately before the event

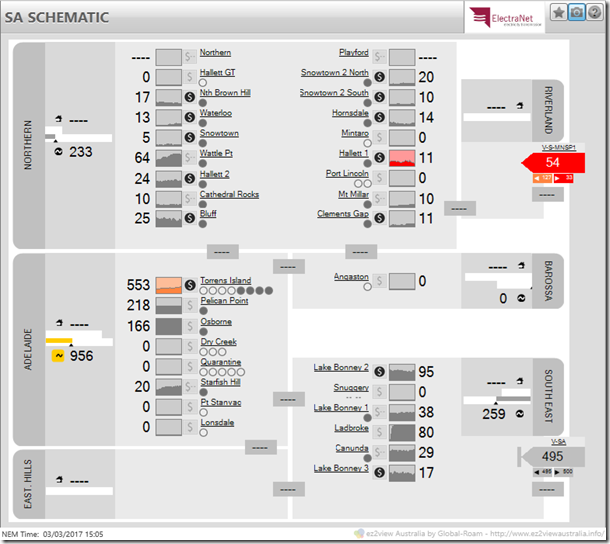

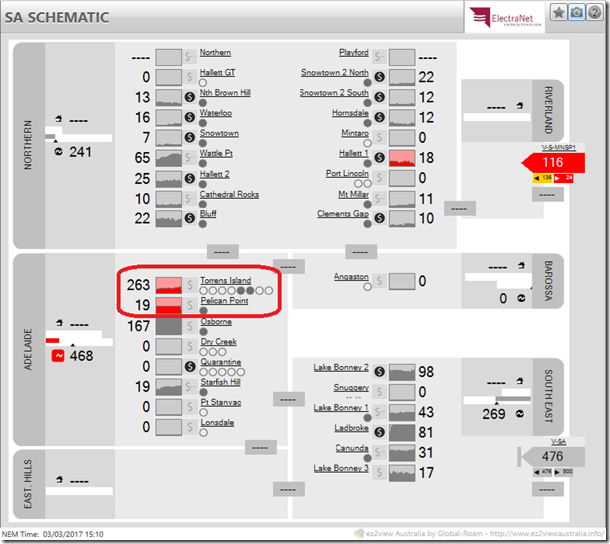

Using ez2view’s Time Travel functionality to view the state of the South Australian region immediately before the event (this SA Schematic snapshot from Dispatch Interval (DI) ending 15:05 AEST shows generator outputs at the beginning of the 5 minute interval, ie at 15:00 AEST or 3:30pm Adelaide daylight saving time):

we can see that four Torrens Island B units were running – the four filled circles underneath the station name above – each producing just under 140 MW. Pelican Point’s output of 218 MW is shown just below.

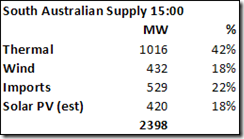

In summary, the aggregate supply mix in SA at this time was as follows:

A few points about these numbers for those who are looking closely:

- Wind includes both semi-scheduled and non-scheduled generators;

- Imports are based on actual metered interconnector flows at 15:00, not the end-of-period dispatch targets for 15:05 shown against Heywood (V-SA) and Murraylink (V-S-MNSP1) in the ez2view screenshot above;

- Solar PV (est) is based on AEMO’s after-the-fact half hourly estimates of small scale rooftop PV production – I’ve averaged the values given by AEMO for the two intervals 14:30-15:00 and 15:00-15:30;

- For all these reasons the total supply number shown differs from AEMO’s ‘Scheduled Demand’ value for DI 15:05, but gives a better reflection of total actual supply just before the incident.

5 minutes later

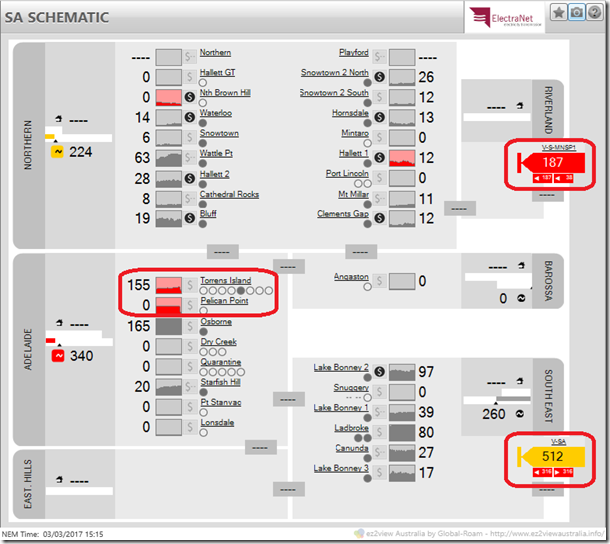

Stepping forward to DI 15:10, the 5 minutes commencing at 15:05 (just two minutes after the incident time of 15:03 reported in AEMO’s Market Notice 57817 as shown above), the SA Schematic view shows this:

We see, in comparison with the previous schematic view, that:

- Two Torrens Island ‘B’ units have tripped – the last two empty circles below the station name, ie units B3 and B4, and station output has reduced by 290 MW;

- Pelican Point output has fallen to 19 MW from its previous 218 MW;

- despite this loss of nearly 500 MW of generation output, we can’t see obvious material changes in the other generator outputs or interconnector dispatch targets that are nearly large enough to compensate – what’s going on??

Another 5 minutes later

Hold that last question while we step forward one more 5 minute Dispatch Interval to show the situation at 15:10, the start time of DI ending 15:15.

Now a third Torrens Island unit, B2, has dropped off, reducing station output a further 108 MW (net) leaving only one online unit, and Pelican Point output is zero.

We can also see higher interconnector targets than before, but for those doing the sums in their heads, not nearly high enough to offset the cumulative net loss of 621 MW of generation from Torrens Island and Pelican Point in the few minutes since the incident at 15:03. What’s the cause of this apparent discrepancy?

Part of the reason is that actual interconnector flows can and do differ significantly from AEMO’s dispatch targets. Often it’s the interconnectors – particularly non-controllable AC interconnectors like Heywood – that take up any differences between what AEMO schedules to occur in a NEM region over every 5 minute Dispatch Interval, and what actually happens. AEMO schedules on the basis of its 5 minute ahead demand forecast, calculates end-of-interval dispatch targets for generators according to current output levels, bids, constraints etc then electronically sends those dispatch instructions to generators’ control systems. As second-to-second variations in demand and generation output occur in the actual power system, a variety of mechanisms – principally ancillary services provided by certain generators – are used to maintain real time supply-demand balance. This can and does result in significant variations in actual outcomes from what AEMO’s schedule assumes will happen.

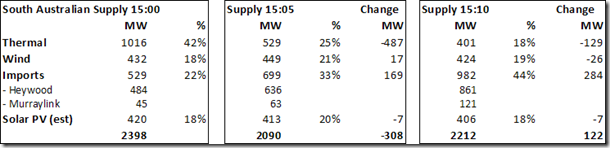

That’s why in the earlier supply mix table for 15:00 I used actual interconnector flows not targets – I’ve updated that table below to show how the mix – and total supply level – changed between 15:00 and 15:10. I’ve also broken out imports across the two separate interconnectors:

(The data for these tables are drawn from AEMO’s published market data, but can require a bit of extra manipulation to extract in comparison with standard views in many tools like ez2view)

‘Missing demand’ and very high interconnector flows may have “saved” the system

What first jumps out from the table above is that in the same interval that the first two Torrens Island B units tripped and Pelican Point lost most of its output – effectively somewhere between 15:03 and 15:05 – demand in South Australia must also have dropped by ~300 MW. Supply and demand must remain in real time balance in any electricity system, so absent gross measurement errors – and there is no obvious sign of these – the supply reduction of 307 MW shown at 15:05 must have corresponded with a matching reduction in power demand.

There have been no reports of general load shedding associated with the incident, and I have contacted both AEMO and SA Power Networks seeking an explanation – the latter has confirmed that there was no distribution system level general load shedding – neither directed (eg rolling blackouts like those initiated on February 8) nor automated (eg Under Frequency Load Shedding protection).

Since the load reduction must have occurred very rapidly between 15:03 and 15:05, and well before there were any general calls for the public to conserve electricity later that afternoon, it cannot have been the result of conscious actions by energy users to reduce usage. It’s also apparent that it was a relatively short-term drop, with ~120 MW of load returning by 15:10 (followed by another 105 MW by 15:15, not shown above). I’m afraid the reasons for this remain a mystery at this early stage – if any readers have a convincing explanation I’d be very glad to hear it!
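For readers who want to check the arithmetic, here is a minimal Python sketch of the balance reasoning above. It uses only figures quoted in this post (the 290 MW fall at Torrens Island B, Pelican Point falling from 218 MW to 19 MW, the 307 MW fall in total supply, and the 120 MW and 105 MW blocks of returning load). The function and variable names are my own, and treating 307 MW as the starting point for the load recovery is an assumption rather than a measured value.

```python
# Figures quoted in the post, all in MW. Names are illustrative only.
TIPS_B_LOSS_1505 = 290                 # Torrens Island B output reduction by 15:05
PELICAN_POINT_LOSS_1505 = 218 - 19     # Pelican Point fell from 218 MW to 19 MW
TOTAL_SUPPLY_DROP_1505 = 307           # fall in total SA supply, 15:00 to 15:05


def other_supply_increase(tripped_loss_mw, total_supply_drop_mw):
    """Net increase from everything that did not trip (imports plus other
    generators), found by reconciling the tripped output with the smaller
    fall in total supply."""
    return tripped_loss_mw - total_supply_drop_mw


def load_still_missing(initial_drop_mw, returns_mw):
    """Running total of load still off the system after each block returns.

    Assumes the initial demand drop equals the fall in total supply, because
    supply and demand must balance in real time.
    """
    remaining = initial_drop_mw
    result = []
    for returned in returns_mw:
        remaining -= returned
        result.append(remaining)
    return result


tripped = TIPS_B_LOSS_1505 + PELICAN_POINT_LOSS_1505
print(tripped)                                                  # 489
print(other_supply_increase(tripped, TOTAL_SUPPLY_DROP_1505))   # 182
print(load_still_missing(TOTAL_SUPPLY_DROP_1505, [120, 105]))   # [187, 82]
```

On these numbers roughly 182 MW of the lost output was covered by other sources at 15:05, and something like 187 MW of load was still off at 15:10, falling to about 82 MW by 15:15.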

What’s also obvious at 15:05 and especially 15:10 (as load picked up and another TIPS B unit went offline) is the very large increase in imports on the Heywood interconnector necessary to maintain supply-demand balance in South Australia. The only other controllable thermal generation remaining online at the time was a single TIPS B unit, the Osborne cogeneration facility and the Ladbroke Grove gas turbines in the South East of the state. The latter two stations were essentially at full output already, while the TIPS B1 unit had only about 70 MW spare capacity, which took 20 minutes to ramp up.

Other dispatchable generation started coming online from about 15:10 (principally 60 MW of diesel-fuelled capacity at Port Stanvac followed slightly later by gas turbines at Dry Creek and Quarantine), but in the immediate aftermath of the Torrens Island B and Pelican Point trips it was interconnector flows increasing to (at least) 861 MW on Heywood and by much smaller amounts on Murraylink that balanced the system.

That level of import across the Heywood interconnector is well outside its normal secure operating limits of 600-680 MW, and while those limits provide for short term excursions to higher levels than those to cater for defined contingencies, the level shown above is uncomfortably close to the transfer level of 890 MW reached just before separation from Victoria last September, quoted in AEMO’s report on the SA Black System event (see p45).

I should stress here that the detailed nature and sequence of events on the two days in question is very different, and especially that the Black System report makes it clear that it was not the absolute level of power flow itself across Heywood on 28 September 2016, but a more complex loss of synchronism / transient instability issue that (correctly) triggered separation of SA from Victoria and the consequent system black in SA.

However it remains true that on both days flows on Heywood were pushed well beyond secure operating limits, which are generally set to provide headroom for only “credible” contingencies such as the loss of the largest single generating unit in a region. Losing three Torrens Island B units and Pelican Point in quick succession is well beyond the scope of such credible contingencies.

Tentative Conclusions

I have no doubt at all that AEMO, ElectraNet and many others will be looking very closely at the root causes of this incident, particularly the reasons for the near-simultaneous trip of Pelican Point, its impacts, and its implications for system security in South Australia.

If the apparent loss of 300 MW of system load at around the same time is confirmed (and I can see no reason why it won’t), its nature and underlying cause will also be fascinating to understand – without that near-immediate relief on total supply requirements for South Australia, it is very hard to see how a trip of the Heywood interconnector (which would then have been attempting to transfer well over 1000 MW into SA) would not have occurred.
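A rough sketch of that counterfactual is below. It assumes, and this is only an assumption for illustration, that every MW of load that dropped off would otherwise have had to arrive over Heywood. The 861 MW flow, the 600-680 MW secure range and the 890 MW September figure are quoted earlier in this post; the two "missing load" cases (307 MW at 15:05, and 307 − 120 = 187 MW at 15:10) and all names are my own framing.

```python
HEYWOOD_ACTUAL_MW = 861             # (at least) this level at about 15:10
SECURE_LIMIT_RANGE_MW = (600, 680)  # normal secure operating limits
SEPTEMBER_PRE_SEPARATION_MW = 890   # flow just before separation, 28 Sep 2016


def counterfactual_heywood_flow(actual_flow_mw, missing_load_mw,
                                share_via_heywood=1.0):
    """Heywood flow had the missing load stayed connected.

    share_via_heywood is a hypothetical knob: the fraction of the extra
    demand assumed to be met over Heywood rather than by other sources.
    """
    return actual_flow_mw + share_via_heywood * missing_load_mw


for label, missing_mw in [("load still off at 15:10", 187),
                          ("full drop seen at 15:05", 307)]:
    flow = counterfactual_heywood_flow(HEYWOOD_ACTUAL_MW, missing_mw)
    print(label, flow,
          "above secure range:", flow > SECURE_LIMIT_RANGE_MW[1],
          "above September level:", flow > SEPTEMBER_PRE_SEPARATION_MW)
```

Either case lands above 1000 MW under this simple assumption, though the real split between Heywood, Murraylink and local generation would depend on system conditions that this sketch ignores.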

That in turn would have led with absolute certainty to another system black event, and heaven knows what next-day headlines and political witch-hunts to follow.


Allan O’Neil has worked in Australia’s wholesale energy markets since their creation in the mid-1990s, in trading, risk management, forecasting and analytical roles with major NEM electricity and gas retail and generation companies. He is now an independent energy markets consultant, working with clients on projects across a spectrum of wholesale, retail, electricity and gas issues. You can view Allan’s LinkedIn profile here.

Allan will be regularly reviewing market events here on WattClarity. Allan has also begun providing an on-site educational service covering how spot prices are set in the NEM, and other important aspects of the physical electricity market – further details here.

Perhaps the emergency capacity for the Heywood inter-connector should be up-rated. It’s already had last Friday’s stress test and passed. Currently the emergency level is specified on AEMO’s website as 701 MW for summer, 717 MW for autumn and spring, and 762 MW for winter. Surely a more sophisticated set of temperature-controlled limits could be applied, as on the Vic-NSW inter-connector.

I have only just signed up to get your email, which is very informative and interesting.

While I have read what you say you do, it is hard to get my head around someone wanting to do this without some kind of angle – you know the old saying that there is no free lunch.

Would you like to tell me a bit more about why you do what you do, in which, as I said, I am very interested?

Cheers,

Ken in Adelaide.

Drop in load was probably due to “load relief” – as network voltages fall, so too does demand – it’s the common Np and Nq indices power engineers are familiar with.
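The voltage dependence referred to here is commonly written as an exponential load model, P = P0 × (V/V0)^Np, where Np = 2 corresponds to constant-impedance load, Np = 1 to constant-current load and Np = 0 to constant-power load. A minimal sketch of the real-power half follows; the 1,000 MW base load, the voltages and the index values are illustrative assumptions, not measurements from this event.

```python
def load_at_voltage(p0_mw, v_pu, np_index):
    """Real power drawn at voltage v_pu (per unit of nominal) under the
    exponential load model P = P0 * V**Np."""
    return p0_mw * v_pu ** np_index


def voltage_for_load_drop(drop_fraction, np_index):
    """Per-unit voltage that would produce the given fractional load drop.

    Not defined for np_index == 0, since constant-power load does not
    respond to voltage at all.
    """
    if np_index == 0:
        raise ValueError("constant-power load (Np = 0) has no voltage response")
    return (1.0 - drop_fraction) ** (1.0 / np_index)


# Illustrative only: 1,000 MW of load at 0.9 pu voltage for three load types.
for np_index in (0.0, 1.0, 2.0):
    print(np_index, round(load_at_voltage(1000.0, 0.9, np_index), 1))

# Voltage needed for a 19% drop in purely constant-impedance load.
print(round(voltage_for_load_drop(0.19, 2.0), 3))
```

Real systems are a mix of load types, so the effective index sits somewhere between these cases, and the model says nothing about how long the relief lasts once voltage recovers.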

So far as the “missing” load is concerned I wonder if consumer appliances, specifically modern inverter type air-conditioners, may have tripped on account of a sudden drop in voltage and then re-started a few minutes later?

I don’t know how these systems respond to sudden voltage fluctuations but if the load did indeed go “missing” and it wasn’t due to load shedding or a large industrial load being tripped then there aren’t many other possibilities given we’re talking about 300MW or so.

Losing four operational units to one transformer fire does not seem like ‘single failure proof’ design.

If no distribution level load shedding occurred, then the shed system load may have been directly connected to the HV grid and shed either automatically or manually by AEMO?

Directly connected HV would normally be big industrial loads. Does SA have ~300MW of those? Reconnecting those loads could have been done gradually as additional generation synchronised and loaded.

This will be due to a combination of issues.

The most significant reason probably is that the other generators both synchronous and non synchronous did not drop their skirts and run away. There was a lower percentage of non synchronous generation running than for the September event and the majority of the non synchronous generation was remote from the event and probably did not see any severe voltage or frequency fluctuations anyway. So it didn’t all trip as in the September event and the synchronous generation close to the event would be more forgiving.

The second is that the measurement is probably not correct – mismatched instantaneous readings from a transient event – and you would need to examine carefully the actual voltage and frequency fluctuations at various points in the network.

The indicated drop in power is not all that great at just over 12.5% of the load immediately prior to the event. There obviously was a significant drop in voltage – reports of lights dimming – so all resistive load will have immediately stopped pulling a fair bit of power.

We would probably also be very surprised by the actual load that was shed and then reconnected quickly. Every UPS in the state would have flipped to battery (and all have probably had a service or new batteries, since September had just identified all the flaky ones). Every capacitor in every electronic power supply gave up a bit of its spare store. Quite a few sensitive large loads probably did disconnect and then immediately reclose without any real impact on their connected plant. Some loads probably stayed off but have not made the press because they regularly do that during such events. The reason that these did not become “press events” is that users could immediately go about restarting them so there was no great damage – it just becomes one of the regular unscheduled events that operators are trained to handle.

So in summary there probably was a fairly significant drop in load but it did not become an “event” because the network stayed up – probably thanks to the greater percentage of online synchronous generation than September. And the remoteness of the non synchronous generation meant it was more isolated from the event.

The more you look at the September event the more you begin to wonder if a few nutters distributed through the management systems (AEMO included) were looking to the upcoming wind event as some sort of opportunity to push the barrow of wind capability. It begins to look as if they were almost trying to break some record for the percentage contribution of wind to the SA network – perhaps trying to get to 100% of SA power from wind and took their eye off their prime roles of actually keeping the power on. Even before the event everyone would have known that too much non synchronous generation was a risk – but everyone seemed to ignore that risk. And high winds are always a network risk even if they only bring down a few isolated conductors and tree limbs.

But in September those in the know seemed to deliberately ignore the risks and plodded on blindly with a commitment to loads of wind. This recent event shows the stark contrast in the outcomes. Although they didn’t have the choice this time as the output from the wind and solar resources was relatively low at the time.

A high voltage fault at a large generating station is one of the worst due to fault infeed availability. Hence, depending on the nature of the fault, strange protection system operations can happen. Losing multiple generators during this type of event is not unheard of at all. A fault becoming a 3-phase fault with possibly delayed clearing is very extreme.

All I know about this event is that some piece of equipment probably failed explosively. It happens. With a close-in unbalanced 3-phase fault, generator neutral currents can cause their protection systems to operate. If the failed component impaired protection systems, resulting in a slow clearing of the fault, the event will become quite severe over a large part of the grid. Compressors in air conditioners and fridges will see a voltage loss and kick in restart delay timers/breakers for a period of time. Other voltage sensitive equipment and synchronous motors disconnect and also have a delayed restart. Back in the day, we used to call it “load shaken off” for the lack of a technical term, so you get the idea. Unplanned load cycling.

Power systems cannot be designed for clean, controlled protection for every type of event. I am unfamiliar with the SA protection requirements but in North America, for instance, most areas are designed for proper protection for either a 3-phase fault or a single phase fault with delayed clearing. Hence you can have events that exceed design capabilities but that have a very low probability. Perhaps this was indeed a 3-phase fault with delayed clearing.

Thanks everyone for the comments, some great insights there.

Some specific responses to people’s comments:

Malcolm – I’m not quite sure which piece of data you are referring to (a reference / link would be good), but the numbers look close to the per-circuit thermal ratings (MVA) on the two 275kV Heywood (Vic) to South East (SA) transmission lines. They might represent the highest levels AEMO would be prepared to schedule Heywood interconnector flows to in an emergency (and only for a very short time, << 15 minutes) but there may be other limitations and in any case would not leave the system in a secure operating state. In general the maximum secure transfer capability of the interconnection is quoted at 600-650 MW but frequently other constraints limit imports to considerably lower levels.

Ken – yes there’s a commercial angle to this but it’s primarily about trying to demystify the workings of the market, and you can see from the other commenters’ input that I learn plenty from doing it as well.

Andrew – that form of very short term load relief on frequency / voltage dips certainly exists and assists with riding through the immediate aftermath, but doesn’t persist for the 10-20 minutes we saw last Friday. I think what happened may be more along the lines suggested by Shaun, Michael & Tom.

Shaun – I think you, Michael, & Tom are probably on the right track – it will be interesting to read what AEMO says. Your comment about inverter-connected loads also made me reflect on the large amount of inverter-connected PV generation – had there been a large scale dropout of that generation last Friday then supply-demand balance would have been that much harder to sustain over the interconnector!

PaulD – I don’t believe there is that much large, direct connected load in SA available for contingencies like this (whereas in other mainland states smelters can play this role), and in any case I’m sure we’d have heard about it if (say) Olympic Dam had tripped.

Michael – Great comments, thank you. Relative to the September event, while there was certainly a lot less non-synchronous generation running on Friday, remember that in September it was the loss of nearly half that generation that led to the collapse. Last Friday it was well over half of the running synchronous stuff that fell off. If you take the pre-event September outputs by generation type, then adjust for the loss of 456 MW of wind, you end up with quantities of thermal and large-scale non-synchronous output that are actually pretty close to the mix in SA last Friday once the three TIPS units and Pelican Point had tripped – around 400 MW of online thermal, 4-500 MW of non-synchronous wind, and nearly 1000 MW over the interconnectors. On my reading of the AEMO System Black report it wasn’t then a trip of the remaining online windfarms that precipitated collapse but tripping of the Heywood interconnector on loss-of-synchronism protection. I know what happens in the immediate aftermath of a big system disturbance is nowhere near as simple as my MW output comparison here but it does point to the load response last Friday (and clearly no loss-of-synchronism event) as being pretty important. And thanks for the insights into load behaviour after a big voltage dip – which would have been much closer to the load centre last Friday than it was in September.

All I’ll say on your thoughts on operation of the system on Sep 28 is that 1) there had already been plenty of previous occasions where wind output exceeded SA system demand so I really doubt any “nutters” were going for some sort of record and 2) I remain amazed that more than 5 months after the Black System event there has been almost no sign of any independent review of how the system was operated on the day.

Tom – thank you also, some of your comments address matters far outside my range of technical competence, but again the insights into behaviour of connected loads are really helpful in understanding why the system was able to ride through.

Allan

Below is a link to the file of AEMO line ratings I referred to. My reading of it (rows 11229-11246) is that there are 2 circuits between the South-East terminal station in SA and Heywood. The summer rating for each of 2 lines is 597 MW under normal conditions, and 701 MW for emergencies. The normal rating for the Heywood transformers is 3 x 370 MW (rows 3552-3569). Both of these are ~1100 MW and much higher than the publicised target rating of 680 MW.

In the file, the ratings seem to bear little relationship to the underlying physics or appearance of the line. In some states ratings are based on seasons, while in Victoria it is temperature. Some lines with few conductor cables seem to have disproportionately high ratings (eg Ballarat-Bendigo 347 MW at 20 degrees). The ratings seem to have stronger roots in history than physics, and to be managed with excessive conservatism. I suspect that the best investment in the NEM would be to re-rate the inter-connectors based on sound physics, with dynamic ratings calculated from air temperature, wind speed, and transformer temperature. Re-rating won’t necessarily be cost-free, because it may require stress tests with helicopters flying the length of the line using thermal imaging cameras.

https://www.aemo.com.au/Electricity/National-Electricity-Market-NEM/Data/Network-Data/Transmission-Equipment-Ratings

To interpret it you need the following diagram:

https://aemo.com.au/-/media/Files/Electricity/NEM/Planning_and_Forecasting/Maps/Network-Diagrams-pdf.pdf

Allan, the numbers I have got for the September event have Wind providing 45% and Gas 10% of SA load prior to the event. For this event you have Wind only providing 18% while Gas is at 42% prior to the event – quite a different generation mix. Solar at 13%/18% (last time vs this time) is pretty much the same. Imports at 529MW/613MW (last time vs this time) did give more headroom this time for imports.

I don’t think that September can be split into two separate events such as failure of wind and then separation from the NEM as separate independent events.

Michael – yep I accept that it’s artificial to split the September event into wind failure followed by Heywood trip; however the 456 MW of wind lost did occur in two main tranches – about 200 MW triggered by a voltage dip seven seconds before the interconnector opened, then another 260-odd MW less than a second beforehand, after a sixth major voltage dip. AEMO’s comment was “Voltage collapse began after clearance of the sixth voltage disturbance and sustained generation reduction of 260 MW associated with three wind farms (Groups B and C). This confirms system voltages were stable and would have remained stable if angular instability had been avoided” – see p39 of the most recent System Black report. So I think the event could at least be split pre- and post- that 6th voltage dip (which was due to another transmission fault – see Table 6 / p26).

As the same report makes clear, the windfarm runback / disconnection was due to programmed control system actions following the number of ride-throughs exceeding pre-set limits, rather than failure to ride through the voltage dips themselves. I think that may be important in thinking about how that generation handled the primary faults on that day.

Your percentages for gas and solar generation prior to the black system don’t look right at all, see p18 / Fig 1 showing 330 MW of thermal (~18%) and p24 where solar PV is estimated at 50MW (<3%).

Finally and most importantly, gas may have been 42% prior to last week's event but with more than half that tripping off, the percentage available to then respond was well under 20% of pre-event system demand. I still think that if demand had not fallen substantially, the outcome last Friday may have been quite different.

Allan

@Allan,

It looks to me like they have fitted UVLS (note: not UFLS) to the big industrial loads since the September blackout. It seems to have saved the system.

You state :-

‘SA Power Networks seeking an explanation – the latter has confirmed that there was no distribution system level general load shedding’

Perhaps the next question for SAPN is, “Did the total distribution demand vary over the timespan of the incident and by how much?”

Do we have to postulate that the solar PV stayed attached and the air-conditioners, fridges and freezers tripped because of a voltage dip? I doubt you would notice a ten minute break in air-conditioner operation; it would just look like the thermostat operating. However, …

… UFLS and UVLS:

The system frequency in SA would not vary much during the incident because it was attached to the rest of the NEM grid. It is this fact that makes frequency response in SA ineffective with the Heywood interconnector operating.

I know nothing about UVLS systems in SA but maybe large loads have them fitted? This looks relevant :-

‘Kill switch’ for mines to save electricity grid – The Australian

http://www.theaustralian.com.au/national…for…/9f99512ea64c98b7ef6975f49e050235

4 Oct 2016 – BHP Billiton’s Olympic Dam mine in South Australia. … “The under-voltage load shedding scheme … has been installed as one of the interim …

[PDF]SA Events – update report – Australian Energy Market Operator

http://www.aemo.com.au/-/media/…/AEMO_19-October-2016_SA-UPDATE-REPORT.pdf

19 Oct 2016 – Report – Black System Event in South Australia on 28 September 2016 and …… Availability of under-voltage load shedding at various locations, …

‘the grid manager has been directed to trip a ‘kill switch’ …

Looks like it works!

https://stopthesethings.com/2016/10/04/south-australias-wind-power-debacle-killing-miners-mineral-processors-scarce-jobs/comment-page-1/

Alas. The latest ‘plan’ from its vapid Premier, Jay Weatherill is aimed at keeping the lights on in Adelaide (where the voters are), while cutting power to its miners in the North of the State.

In order to prevent the grid from collapsing every time there’s a total and totally unpredictable collapse in wind power output (see above and our posts here and here), the grid manager has been directed to trip a ‘kill switch’, that will cut power to SA’s two biggest mining operations: BHP Billiton’s Olympic Dam and OZ Mineral’s Prominent Hill.

“The under-voltage load shedding scheme … has been installed as one of the interim measures that will eliminate the risk of voltage collapse spreading throughout the transmission system,” the report said. “This risk if not addressed had the potential to cause widespread power outages under certain network conditions.”

Did Whyalla spin up its gas turbine as well?

‘An update for Whyalla says they have managed to avoid disaster there, courtesy of their gas turbine but they’ll probably still be taking $30 million in losses from the blackout.

http://www.adelaidenow.com.au/news/the-whyalla-steelworks-has-averted-a-worstcase-blast-furnace-shutdown-but-arrium-expects-a-30-million-hit/news-story/a008ff502c41f01b318905b115e6f031‘

Although there was no distribution level shedding, I suspect there will have been automatic HV underfrequency relay trips at the transmission level.

The big difference between this event and last September was, because the interconnector had some reserve capacity and remained on, the frequency held up sufficiently to allow the underfrequency relays to operate and shed that required 300MW or so.

In September, the frequency fell 7HZ in one second, too fast for the underfrequency relays to shed load.

Graeme – I’ve seen some high resolution data on system frequency – not at all SA locations admittedly – and it doesn’t seem to show falls to anywhere near as low as 49Hz, which is the trigger point for the first blocks of UFLS. Unless you mean direct-connected transmission customers (and I believe there are < 100 MW of such load), other loads on the transmission system are distribution offtake feeders etc, and had any of those been tripped by UFLS action then SAPN would certainly have been aware of that. SAPN's comment to me was that they believe the load reduction was along the lines of what Tom referred to above as "load shaken off" by the system voltage disturbance.

But we'll have to wait for a detailed report on this to be sure.

Allan

As an experiment, I tried flicking the supply switch on my running heat pump. It stopped, unsurprisingly, and did not restart for several minutes. It did not wait for the house air temperature to drop to its normal minimum temperature, so the restart was not the normal thermostat operating.

The pump manual says :-

Frequency ON-OFF Max 4 times/h

Inching protection 10 minutes

ON and OFF interval 3 minutes

Power source voltage +/-10%

A sufficient (exact magnitude unknown) voltage dip that reached the extremities of the system would seem to be able to stop all the running air-conditioners and the automatic restarts are postponed for several minutes by design. Would this help to explain some of the ‘missing demand’ and the subsequent demand recovery? The solar PV presumably remained attached.

I find the article and discussion very intriguing. Particularly intriguing is the very plausible notion that there is a high level of “invisible” load shedding provided by inverter powered compressors. Something that occurs to me is that one of the benefits that’s claimed for large battery installations is rapid voltage support and this may be contrary to this load shedding effect. I wonder if the relatively slow response of synchronous generators turns out to be an advantage here… because it allows enough time for the inverters to sense the disturbance and switch off. Of course the inverters of the battery installation can be set to whatever is needed, and it seems it might be good if they are set to match a synchronous generator response, rather than aiming for something “better” that would prevent voltage collapse. There’s probably a middle ground here of enough voltage support so that it does not collapse too deeply causing generation and transmission to drop out, and yet enough collapse that inverter powered devices can sense the drop and switch off.

I suppose the action of the inverters is designed to protect the device, not the grid, but it seems like there would be a good opportunity to use this phenomenon deliberately to protect the grid.

@chris

I have given thought to your suggestion that inverter-driven heat pump tripping is a usable effect. If that were demonstrated to be the case, it could be an answer to SA’s present woes, which are basically driven by heat pumps (OK, wind power generation collapse matters as well) in hot weather, and in particular by large numbers being turned on at much the same time of day.

Because all the houses are hot by late afternoon in summer, starting a domestic heat pump makes it run continuously at high load until the house internal temperature drops to the minimum set point. It is a crazy way to run a heat pump. Pumps should be left on all the time on thermostat control with the set point altered a bit when the house occupants are not there.

That is the way I run my heat pump. It is switched on in September, off in April and runs under thermostat control, drawing 270 to 350 watts when running; overnight the set point is dropped 2C. Done that way a small pump suffices to heat (in the UK) a whole house, except for when outside temperatures dip to freezing. A small pump is not only cheaper than a large one but also more efficient, smaller and less noisy and critically uses far less peak time electricity.

Your suggestion would be a form of fast-acting demand management with a guarantee that the pumps do not restart for a few minutes. Nobody should be that put out that their heat pump is stopped for a few minutes, provided it is not damaged by this being done repeatedly. I suppose the awkward squad might fit surge arresters?

http://digital-library.theiet.org/content/journals/10.1049/joe.2014.0180

In this study, results from testing six heat-pumps are presented to assess their performance at various voltages and hence their impact on voltage stability.

…

Note that all units continued to operate down to a voltage of 0.7 pu or below.

Heat-pump performance: voltage dip/sag, under-voltage and over-voltage

Table 1, under-voltage cut-off, pu: good (0.7); low (0.52); low (0.55); moderate (0.6/0.68); very low (0.24); low (0.58/0.54)

Analysis of results obtained indicates that all units have a reasonably well-defined under-voltage cut-out (and cut-in) level. However, for many of the models this is set at a surprisingly low voltage.

All five units fell to low power operation after the transient and resumed their previous operating conditions after their normal start-up delay (typically 1–3 min).

http://www.abc.net.au/news/2017-03-15/aemo-report-torrens-island-fire-almost-second-statewide-blackout/8357316

‘The market operator believes the rapid change might have occurred as rooftop solar photovoltaic systems tripped out during the disturbance but then reconnected.

AEMO recorded a 400MW drop in energy demand because of the incident.’

Surely, a trip of solar PV generation would be seen by the grid as an INCREASE in demand? Try working the problem again and suggest it was the air-conditioners that tripped and restarted later under micro-processor control?

I have checked the underlying report and the problem is there. One of the recommendations is to check the solar PV performance under grid transients but no mention of the air-conditioners.

Fault at Torrens Island switchyard – AEMO

https://www.aemo.com.au/-/media/…/2017/Report-SA-on-3-March-2017.pdf

FAULT AT TORRENS ISLAND POWER STATION SWITCHYARD AND LOSS OF MULTIPLE GENERATING UNITS, 3 MARCH 2017. Australian …

As PaulD has picked up, AEMO released its initial report into this event a few days ago.

It provides a lot more useful detail on the nature and sequence of the faults that led to the TIPS B and Pelican Point trips. It also confirms that there was no underfrequency load shedding, but that there was a sharp drop in demand immediately after the first faults / trips, and it was probably this that prevented another trip of the Heywood interconnector which would inevitably have led to another System Black in SA.

As it was the interconnector went uncomfortably near breaching the (revised) triggers for Loss of Synchronism protection – see p12: Based on the above, AEMO believes this event came very close to tripping the Heywood Interconnector.

Strangely there is no discussion in the report of the reasons for the 400 MW loss of underlying demand – offset initially by what AEMO believes was loss of 150 MW solar PV generation, yielding a net 250 MW demand drop (see Figure 3 in the AEMO report). I’ve seen subsequent comments attributed to “an AEMO spokesman” relating the underlying demand drop to the “natural response of modern electronic equipment” to low voltages caused by the faults, and stating that “AEMO will develop better modelling of load and rooftop PV response to severe voltage disturbances, to fully understand the implications for power system security.”

Just as well – if the numbers had been the other way around, 150 MW loss of load and 400 MW loss of PV generation – then the lights would definitely have gone out!
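The arithmetic behind that comparison can be laid out as a minimal sketch. The function name and sign convention (positive means more demand for the grid to supply) are illustrative choices of mine, not anything taken from AEMO's report; the MW figures are the ones quoted above.

```python
def net_grid_demand_change(load_lost_mw: float, pv_lost_mw: float) -> float:
    """Change in demand seen by the grid, in MW (negative means demand fell).

    Rooftop PV that trips stops offsetting household load, so the grid sees
    it as extra demand; load that trips reduces demand.
    """
    return pv_lost_mw - load_lost_mw


# Figures as reported for 3 March: 400 MW of load lost, 150 MW of PV lost.
actual = net_grid_demand_change(load_lost_mw=400, pv_lost_mw=150)

# Hypothetical reversed case: 150 MW of load lost, 400 MW of PV lost.
reversed_case = net_grid_demand_change(load_lost_mw=150, pv_lost_mw=400)

print(f"actual:   {actual:+.0f} MW")
print(f"reversed: {reversed_case:+.0f} MW")
```

In the actual case the grid saw 250 MW less demand, which eased the loading on Heywood; in the reversed case it would have seen 250 MW more, on top of the lost generation.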

I don’t know where this has got us.

The situations for September 28 and March 3 still seem very similar, so why didn’t the system go black on March 3?

Fig 7 of the March 3 AEMO report shows a very different impedance trajectory between the two events, and that is why Heywood didn’t separate. But why the difference in the trajectory?

There was slightly more headroom on the Heywood Interconnector for March 3 but peak flows looked very similar.

It is clear that during the March 3 event there was considerable load shedding that seems to be just a natural function of the connected devices. But why didn’t the same shedding happen during the September event? Was there something in the way the wind farms went off that masked the voltage drop at users, so that the same unintentional load shedding did not occur?

The other interesting thing appears to be the reaction of the solar PV systems. The solar systems are happy to drop off but leave the load they were supplying still on line. This seems to have generated an instantaneous hit of 150MW to the load which you would have expected to make the whole state system more susceptible to tripping. This doesn’t seem to be a good idea.

Michael

It’s frustrating that AEMO weren’t able to comment on the reasons why significant load tripped on Mar 3 but not on Sep 28. However the relevant charts in AEMO’s incident reports show that the voltage dips near Adelaide during the March incident were a lot larger than those on the black system day. The nature of the loads would have been different too (more aircon load in March).

On the solar PV question, it’s possible that (some) load associated with those PV systems may have actually tripped – AEMO’s report doesn’t say either way. If you are saying that solar PV systems ought to be set up so that the inverter tripping causes the entire customer supply at that point to disconnect, then that seems a pretty blunt approach, and not one likely to be practicable.

Allan

I would be very interested to understand what caused the difference in voltage responses – September 28 lost 456MW of wind generation and March 3 lost 600MW of thermal, but the system response was so different. What caused that? The March 3 trips were at transformer level while the September 28 trips were at generator level – does that give us a different response?

On the PV response – if I were a non-PV household in Adelaide I would be very pissed off to find that my neighbour (who had installed PV to save money on their power bill) shut down their PV system to protect it and decided instead to pull their whole load from the “high priced” network, and that this additional load helped pull the whole network down. I would have thought it would be relatively simple, and reasonable, to trip a householder’s whole supply in the event that their PV system thought it was unsafe to be connected to the network. I sort of expected that would be the normal response if the PV system was feeding into the network.

It is also a matter of equity. Should users who are trying to distance themselves from centralised generation and network distribution (and save money) be given equal access to that network in the event of an emergency to the extent of compromising that network?

There were two close calls on the Heywood interconnector more than six minutes apart: The initial fault sequence is given as :-

15:00 Heywood 484MW

15:03:46.262 Fault 1: TIPS B4 -130MW tripped

15:03:46.364 PP GT11 -150MW tripped PP ST starts runback

15:03:46.920 Fault 2: 275kV west busbar fault

15:03:47.300 Heywood Max load 962.9MW

15:03:47.812 Fault 3: TIPS B3 -130MW tripped

15:05 Heywood 636MW TIPS B2 starts runback

15:05:15 PP ST tripped -70MW over 89 seconds

15:07:34 TIPS B2 tripped -130MW over 02:34 minutes

15:10 Heywood 861MW, then 2nd peak at 918MW shortly afterwards

It was close to one second from incident start to the maximum load on the Heywood interconnector. There was a second, lower maximum on Heywood just after 15:10.

The first maximum (a 962.9-495=468MW rise) must therefore have been caused by :-

1: the grid transient,

2: the loss of TIPS B4 and PP GT11 (a 280MW fall in generation in total) and

3: any loss of solar PV in the first second [1: suggests a delay to about two seconds], together with

4: any loss of demand in the first second.

It is possible/probable that 3: and 4: saved SA from another statewide blackout. Such a result would be pure serendipity. Fault 3 and the trip of TIPS B3 were too late to affect the first Heywood maximum load.
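The timing claims above can be checked directly from the timestamps in the quoted fault sequence. This is only a sketch: the helper name is my own, and it assumes all three timestamps fall on the same day and are in the same time base.

```python
from datetime import datetime

FMT = "%H:%M:%S.%f"  # e.g. 15:03:46.262


def seconds_between(start: str, end: str) -> float:
    """Elapsed seconds from start to end (same day assumed)."""
    delta = datetime.strptime(end, FMT) - datetime.strptime(start, FMT)
    return delta.total_seconds()


# Timestamps copied from the fault sequence quoted above.
fault_1 = "15:03:46.262"       # TIPS B4 trips
heywood_peak = "15:03:47.300"  # first Heywood maximum
fault_3 = "15:03:47.812"       # TIPS B3 trips

start_to_peak = seconds_between(fault_1, heywood_peak)
peak_to_fault_3 = seconds_between(heywood_peak, fault_3)

print(f"Fault 1 to Heywood peak: {start_to_peak:.3f} s")
print(f"Heywood peak to Fault 3: {peak_to_fault_3:.3f} s")
```

A positive second figure means Fault 3 came after the first peak, which is the basis for saying the TIPS B3 trip could not have contributed to it.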

What is missing is an exact time for the 400MW loss of demand and any PV detachment; the report states ‘near simultaneous disconnection’. Was it before or after the Heywood maximum load at 15:03:47.300 or even spread either side of the maximum?

Fig4 has a horizontal scale of one hour and has not captured the first, sharp interconnector load peak of 962.9MW at 15:03:47.300.

It could be that much of the solar PV stayed attached for close to two seconds i.e. after the first Heywood interconnector peak load [1:].

Is this delay what saved the SA grid from another blackout?

How fast does the solar PV reconnect?

‘According to IEEE1547, DERs must wait 5 minutes before restarting automatically after disconnecting due to off-nominal voltage and frequency, provided that frequency and voltage have been restored within tolerance. … no provision for staggered restart’

1: Performance of Distributed Energy Resources During and … – NERC

http://www.nerc.com/pa/RAPA/ra/…/IVGTF17_PC_FinalDraft_December_clean.pdf

distribution system, and, in particular, with distributed wind and photovoltaic (PV) resources. ….. system are likely to trip on under voltage within ten cycles.

Includes references to IEEE Standard 1547 must-trip requirements e.g.

V<50% Max Clearing Time 0.16sec

50%<=V<88% MCT 2.0 sec

Fig 1: refers

Fig 8: shows A/Cs (air-conditioners) tripping on thermal protection and coming back.
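As a rough illustration of the two must-trip bands quoted above, the lookup below returns the maximum clearing time for a given voltage. The function name is hypothetical, and only the two undervoltage bands cited in this comment are encoded; anything at or above 88% is left undefined here rather than guessed.

```python
from typing import Optional


def max_clearing_time_s(voltage_pu: float) -> Optional[float]:
    """Maximum clearing time in seconds for the undervoltage bands quoted above.

    Returns None for voltages at or above 0.88 pu, which are not covered
    by the figures quoted in this thread.
    """
    if voltage_pu < 0.50:
        return 0.16
    if voltage_pu < 0.88:
        return 2.0
    return None


# Illustrative dip depths only, not measured values from the 3 March event.
for v in (0.30, 0.60, 0.95):
    print(f"V = {v:.2f} pu -> max clearing time: {max_clearing_time_s(v)}")
```

On these figures a shallow dip (between 50% and 88%) allows an inverter to stay connected for up to two seconds, which is consistent with the suggestion that much of the PV may have dropped off only after the first Heywood peak.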

Thanks very much PaulD, I hadn’t previously noticed that level of detail.

I can’t access the NERC paper you referenced at the moment but will keep trying.

In your dot point 3, are you suggesting that some of the PV systems could have disconnected within 0.2secs (10 cycles) and made up much of the difference between the rise of 468MW on the interconnector and the loss of only 280MW of generation? That would leave 188MW of apparent extra load unaccounted for.

This is not shown on Fig 3 of the AEMO report but that could simply be because the timing of the SCADA could not pick up these rapid changes. This could mean that the 150MW rise we do see on Fig 3 is some of the remaining PV systems falling off. After all it appears there was about 420MW of solar on line at the time and so far we only have 188MW plus 150MW of “phantom” load.
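The bookkeeping in the last two paragraphs can be written out as follows. It is a sketch of the hypothesis only: it assumes the whole unexplained rise on Heywood was PV dropping off, which is a guess rather than a finding, and the variable names are my own. The MW figures are the ones quoted in this thread.

```python
# Figures quoted in this thread (MW).
heywood_rise = 468     # rise on Heywood to the first peak
generation_lost = 280  # TIPS B4 plus PP GT11
pv_seen_on_fig_3 = 150 # rise in load AEMO attributes to PV tripping
pv_online = 420        # approximate solar PV on line at the time

# Rise on the interconnector not explained by lost generation.
unexplained = heywood_rise - generation_lost

# ASSUMPTION: treat all of that unexplained rise as fast PV disconnection.
pv_accounted_for = unexplained + pv_seen_on_fig_3
pv_still_unaccounted = pv_online - pv_accounted_for

print(f"Unexplained rise:       {unexplained} MW")
print(f"PV accounted for:       {pv_accounted_for} MW")
print(f"PV not yet accounted:   {pv_still_unaccounted} MW")
```

Any load that tripped in the first second would reduce the interconnector rise, so under this hypothesis the true PV loss could be larger than the unexplained figure suggests.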

We might get some idea of how fast the PV systems reconnected by also looking at Fig 3. The 150MW rise in load that AEMO has attributed to the PV systems, gradually disappears over the following 2 secs and the load keeps falling, but more slowly for a further 2 secs. So this could be PV systems coming back on rather than additional load simply falling off the system.

But all of this just makes the question of what was different between the March 3 event and the September 28 event all the more perplexing.